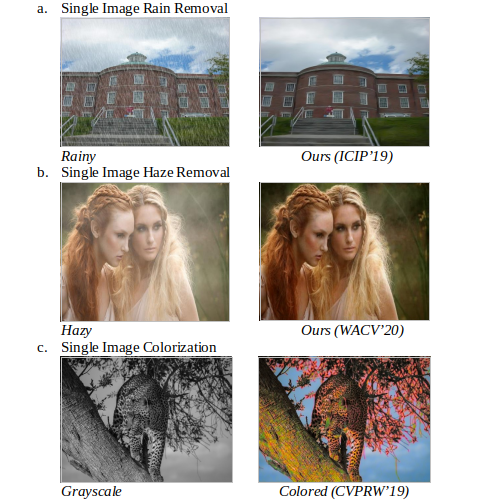

Outdoor images and videos are often degraded by atmospheric noise. The characteristics of such noise range from periodic or pseudo-periodic to varying exponentially with the pixel's depth, as in adverse weather conditions such as rain and haze: rain streaks exhibit a pseudo-periodic pattern, whereas haze varies exponentially with the depth of the pixel. These degradations can cause problems in real-time applications such as surveillance, SLAM for autonomous vehicle motion, UAV and satellite imaging (satellite optical images), and missile communication systems (target object detection). The proposed work aims to restore degraded images and videos and can be applied to any of the tasks mentioned above. With the evolution of deep learning, several methods have been proposed for this type of image and video restoration. However, obtaining realistic training data and adapting to real-world noise remain major challenges. Moreover, the restored images often suffer from severe visual artifacts such as color degradation and halo artifacts.
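To make the two degradation types concrete, the NumPy sketch below synthesizes both on a toy image. It assumes the standard atmospheric scattering model, in which transmission decays as T(x) = exp(-β·d(x)) with scene depth d(x), and a hypothetical rain model that draws vertical streaks roughly every `period` columns with random jitter. The function names and parameters (`add_haze`, `add_rain`, `beta`, `airlight`, `period`, `jitter`) are illustrative assumptions, not the lab's data-generation pipeline.

```python
import numpy as np


def add_haze(clean, depth, airlight=0.8, beta=1.2):
    """Synthesize haze: H = C*T + L*(1 - T) with T = exp(-beta * depth)."""
    t = np.exp(-beta * depth)[..., None]  # (H, W, 1) so it broadcasts over RGB
    return clean * t + airlight * (1.0 - t)


def add_rain(clean, period=8, jitter=2, length=6, intensity=0.6, seed=0):
    """Add pseudo-periodic vertical streaks, roughly one every `period` columns."""
    rng = np.random.default_rng(seed)
    h, w = clean.shape[:2]
    streaks = np.zeros((h, w))
    for col in range(0, w, period):
        # Jitter the column so the pattern is only approximately periodic.
        c = int(np.clip(col + rng.integers(-jitter, jitter + 1), 0, w - 1))
        # Dashed streak: segments of `length` pixels separated by gaps of `length`.
        for top in range(int(rng.integers(0, length)), h, 2 * length):
            streaks[top:top + length, c] = intensity
    return np.clip(clean + streaks[..., None], 0.0, 1.0)


# Toy scene: uniform gray image whose depth grows from the top row to the bottom row.
clean = np.full((64, 64, 3), 0.3)
depth = np.tile(np.linspace(0.0, 3.0, 64)[:, None], (1, 64))
hazy = add_haze(clean, depth)
rainy = add_rain(clean)
```

Pixels at depth 0 are left untouched by the haze, while distant rows drift toward the airlight value. Setting `jitter=0` makes the streak columns strictly periodic.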

[1]. Andrey Ignatov, Jagruti Patel, Radu Timofte, ..., Sujoy Ghosh, Prasen Kumar Sharma, Arijit Sur, "AIM 2019 Challenge on Bokeh Effect Synthesis: Methods and Results", 2019 IEEE/CVF International Conference on Computer Vision Workshop (ICCVW).

[2]. Shuhang Gu, Radu Timofte, Richard Zhang, ..., Prasen Kumar Sharma, ..., Arijit Sur, Gokhan Ozbulak, "NTIRE 2019 Challenge on Image Colorization: Report", IEEE Conference on Computer Vision and Pattern Recognition (CVPR) Workshops, June 2019, California, USA.

[3]. Prasen Kumar Sharma, Priyankar Jain, Arijit Sur, "Dual-Domain Single Image De-Raining Using Conditional Generative Adversarial Network", IEEE International Conference on Image Processing (ICIP), 2019.

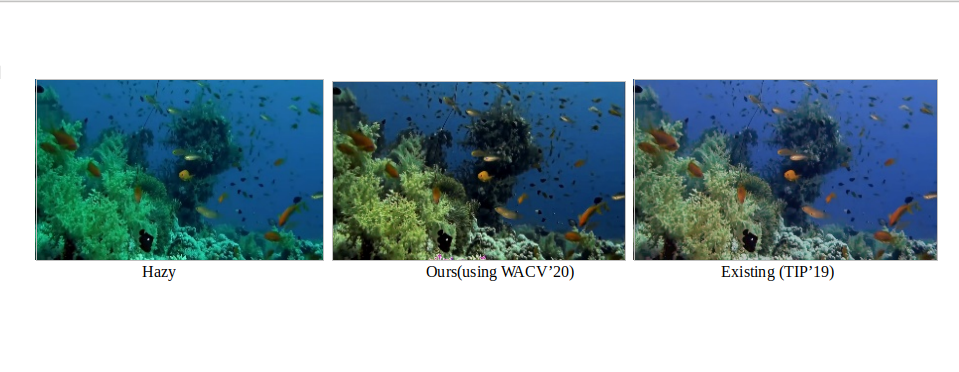

The problem of underwater image enhancement has received considerable attention in recent years due to its wide range of applications in marine engineering and aquatic robotics. The two foremost factors that make underwater image restoration difficult are scattering and color distortion. Similar to single image dehazing, underwater image dehazing consists of two major phases: (1) haze removal followed by a post-processing step, and (2) color correction. Mathematically, the formation of a hazy underwater image can be described by the transmission-based haze model H(x) = C(x)T(x) + L(x)(1 - T(x)), where H, C, T, and L denote the hazy image, clean image, transmission map, and atmospheric light map, respectively. The deep learning-based method proposed in Sharma et al. (WACV’20) can be used to generate de-hazed images in the underwater scenario, as shown in the figure.
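The two phases can be illustrated with a minimal NumPy sketch, assuming the transmission map T and atmospheric light L are already known or have been estimated by some other means. Phase 1 inverts H(x) = C(x)T(x) + L(x)(1 - T(x)) for C(x), clamping T from below (the hypothetical `t_min` threshold) so that noise is not amplified where transmission is tiny. For phase 2, the classic gray-world white balance is used purely as a stand-in color-correction step; it is not the method of Sharma et al. (WACV’20). The function names and the bluish-green light value are illustrative assumptions.

```python
import numpy as np


def recover_clean(hazy, transmission, airlight, t_min=0.1):
    """Phase 1: invert H = C*T + L*(1 - T) for C, given estimates of T and L."""
    t = np.maximum(transmission, t_min)[..., None]  # clamp T, broadcast over channels
    return (hazy - airlight * (1.0 - t)) / t


def gray_world(img, eps=1e-6):
    """Phase 2 stand-in: rescale channels so they all share the same mean (gray-world)."""
    means = img.reshape(-1, img.shape[-1]).mean(axis=0)  # per-channel means
    gain = means.mean() / (means + eps)
    return np.clip(img * gain, 0.0, 1.0)


rng = np.random.default_rng(0)
clean = rng.uniform(0.2, 0.8, size=(32, 32, 3))
T = rng.uniform(0.3, 0.9, size=(32, 32))
L = np.array([0.1, 0.6, 0.7])  # illustrative bluish-green veiling light (R, G, B)
H = clean * T[..., None] + L * (1.0 - T[..., None])  # forward haze model

restored = recover_clean(H, T, L)
balanced = gray_world(restored)
```

Because every T value here exceeds `t_min`, the inversion recovers the clean image exactly; with real, estimated T and L it only approximates it, which is why the post-processing and color-correction phase follows.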

[1]. Prasen Kumar Sharma, Priyankar Jain, Arijit Sur, "Scale-aware Conditional Generative Adversarial Network for Image Dehazing", Winter Conference on Applications of Computer Vision (WACV 2020), USA.

Steganography and steganalysis in digital images have been studied for decades. Steganography is the art of hiding secret information in digital images so that it cannot be perceived by the human visual system (HVS). Steganalysis, on the other hand, is the complementary process: it examines a given image with the intent of detecting traces of hidden information. Recently, deep learning has been explored for both steganography and steganalysis. The main goal is to train deep learning-based models that efficiently learn to hide given secret information within an image (steganography) and to detect traces of hidden information in a given image (steganalysis).
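As a concrete, classical (non-deep-learning) illustration of the hiding side, the sketch below implements least-significant-bit (LSB) replacement with NumPy: each message bit overwrites the lowest bit of one pixel, so no pixel changes by more than one intensity level, a change the HVS cannot perceive. This textbook baseline is assumed here only for illustration and is not the lab's method; steganalysis works by detecting the statistical traces that such small changes leave behind. `embed_lsb` and `extract_lsb` are hypothetical helper names.

```python
import numpy as np


def embed_lsb(cover, message):
    """Hide `message` (bytes) in the least significant bits of a uint8 image."""
    bits = np.unpackbits(np.frombuffer(message, dtype=np.uint8))  # 8 bits per byte
    flat = cover.flatten()  # flatten() returns a copy, so `cover` is not modified
    if bits.size > flat.size:
        raise ValueError("message too long for this cover image")
    # Clear the lowest bit of the first len(bits) pixels, then write the message bits.
    flat[:bits.size] = (flat[:bits.size] & 0xFE) | bits
    return flat.reshape(cover.shape)


def extract_lsb(stego, n_bytes):
    """Read back `n_bytes` bytes from the least significant bits of `stego`."""
    bits = stego.flatten()[:n_bytes * 8] & 1
    return np.packbits(bits).tobytes()


rng = np.random.default_rng(0)
cover = rng.integers(0, 256, size=(16, 16), dtype=np.uint8)
secret = b"MM lab"
stego = embed_lsb(cover, secret)
recovered = extract_lsb(stego, len(secret))
```

The receiver must know the message length (or an agreed terminator); real schemes also spread bits pseudo-randomly across the image instead of filling pixels in raster order.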

[1]. Brijesh Singh, Prasen Kumar Sharma, Rupal Saxena, Arijit Sur, Pinaki Mitra, "A New Steganalysis Method Using Densely Connected ConvNets", PReMI 2019.

[2]. Ritvik Rawat, Brijesh Singh, Arijit Sur, Pinaki Mitra, "Steganalysis for Clustering Modification Direction Steganography", Multimedia Tools and Applications, Springer, 2019.

This problem aims to restore a high-resolution image or video from its low-resolution counterpart. The simplest way to obtain a super-resolved version of a low-resolution video sequence is to apply a single-image super-resolution technique to every frame individually. However, this may destroy the temporal details inherent in video sequences, especially motion, and can introduce flickering in the super-resolved video. Therefore, in addition to the spatial information within each frame, Video SR approaches must also exploit the motion information between consecutive frames. Formally, given a set of low-resolution frames, the aim of Video SR is to produce the corresponding set of high-resolution frames. We have utilized various deep learning-based techniques, and the work is currently in progress.
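The contrast between frame-by-frame and multi-frame processing can be sketched in NumPy. Nearest-neighbour upsampling stands in for an arbitrary single-image SR method, `temporal_windows` gathers the neighbouring frames around each target frame (the kind of input a multi-frame Video SR network typically consumes), and `temporal_flicker` is a crude flicker proxy: the mean absolute change between consecutive frames. All three helpers and the window `radius` are hypothetical, chosen only to illustrate the data layout; no actual Video SR model is implemented here.

```python
import numpy as np


def upscale_nearest(frame, scale):
    """Per-frame baseline: nearest-neighbour upsampling that ignores other frames."""
    return frame.repeat(scale, axis=0).repeat(scale, axis=1)


def temporal_windows(frames, radius=2):
    """Stack frames t-radius..t+radius for every target frame t (edges clamped).

    frames: (T, H, W, C) array -> returns (T, 2*radius + 1, H, W, C).
    """
    n = frames.shape[0]
    offsets = np.arange(-radius, radius + 1)
    idx = np.clip(np.arange(n)[:, None] + offsets[None, :], 0, n - 1)
    return frames[idx]


def temporal_flicker(video):
    """Mean absolute change between consecutive frames, a crude flicker proxy."""
    return float(np.abs(np.diff(video, axis=0)).mean())


rng = np.random.default_rng(0)
lr = rng.random((5, 8, 8, 3))  # 5 low-resolution RGB frames
windows = temporal_windows(lr, radius=1)
baseline = np.stack([upscale_nearest(f, 4) for f in lr])
```

A multi-frame model would map each `windows[t]` to one high-resolution frame, letting it align and fuse motion information that the per-frame `baseline` never sees.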

Satellite image processing is an important field of research and development that deals with images of the Earth captured by artificial satellites. Satellite imagery is widely used to plan infrastructure, monitor environmental conditions, and detect and respond to disasters. In the MM lab, we focus on various computer vision applications on aerial and satellite data using deep learning, including aerial/satellite semantic segmentation, image translation, super-resolution, colorization, and object detection and tracking.

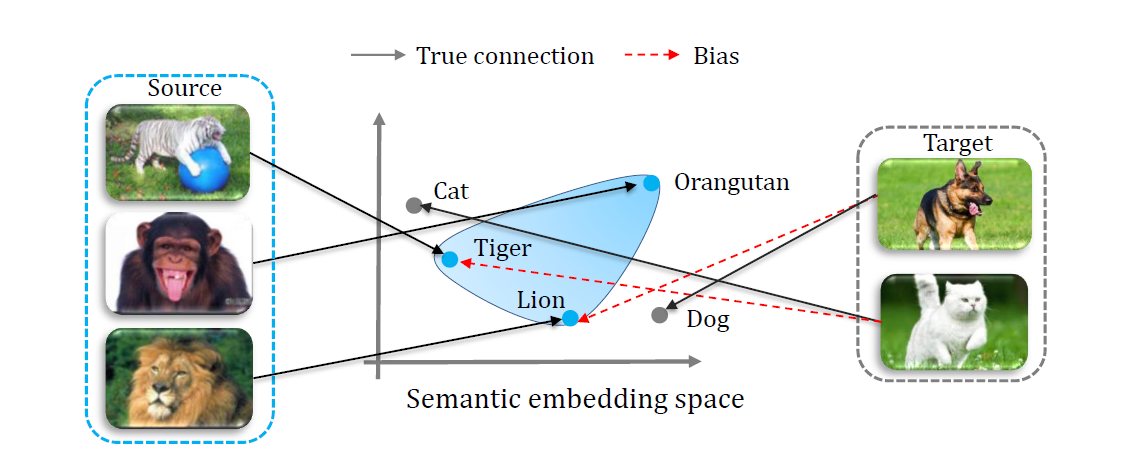

Most machine learning methods focus on classifying instances whose classes have already been seen during training. In practice, however, many applications require classifying instances whose classes are not included in the training data, simply because labeled data is hard to find or expensive and time-consuming to annotate. Zero-shot learning (ZSL) is a powerful and promising learning paradigm in which the classes covered by the training instances and the classes we aim to classify are disjoint. In computer vision, ZSL has been widely used in image-related applications such as image recognition, image segmentation, pose estimation, domain adaptation, and person identification, as well as in video-related applications such as unseen action recognition, zero-shot live-stream retrieval, and action localization. ZSL has also found use in several other domains, such as natural language processing, medical imaging, and activity recognition from sensor data, and it has become an increasingly popular research topic.
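One common family of ZSL methods maps visual features into a semantic space (class attribute vectors or word embeddings) that is shared by seen and unseen classes. The sketch below assumes that setup: it learns a linear map from features to class semantics on seen classes with ridge regression, then labels samples of unseen classes by the most cosine-similar unseen class vector. The data are synthetic, and `fit_attribute_map`, `predict_unseen`, and the regularizer `lam` are hypothetical; this is an illustrative baseline rather than the lab's method.

```python
import numpy as np


def fit_attribute_map(features, labels, class_attributes, lam=1e-2):
    """Ridge regression W mapping visual features to the semantic vectors of SEEN classes."""
    targets = class_attributes[labels]  # (N, A): one semantic vector per sample
    d = features.shape[1]
    gram = features.T @ features + lam * np.eye(d)
    return np.linalg.solve(gram, features.T @ targets)  # (D, A)


def predict_unseen(features, W, unseen_attributes):
    """Label each sample with the UNSEEN class whose vector is most cosine-similar."""
    pred = features @ W
    pred /= np.linalg.norm(pred, axis=1, keepdims=True) + 1e-12
    protos = unseen_attributes / (
        np.linalg.norm(unseen_attributes, axis=1, keepdims=True) + 1e-12)
    return np.argmax(pred @ protos.T, axis=1)


# Synthetic data: features are a fixed linear function of class semantics plus noise.
rng = np.random.default_rng(0)
A, D = 8, 20
M = rng.normal(size=(A, D))
seen_attr = rng.normal(size=(12, A))   # 12 seen classes
unseen_attr = rng.normal(size=(4, A))  # 4 unseen classes, never used in training

y_train = np.repeat(np.arange(12), 30)
X_train = seen_attr[y_train] @ M + 0.05 * rng.normal(size=(y_train.size, D))
W = fit_attribute_map(X_train, y_train, seen_attr)

y_test = np.repeat(np.arange(4), 30)
X_test = unseen_attr[y_test] @ M + 0.05 * rng.normal(size=(y_test.size, D))
accuracy = float(np.mean(predict_unseen(X_test, W, unseen_attr) == y_test))
```

Since the training labels never include the four unseen classes, any accuracy well above chance (25%) comes entirely from transferring the learned feature-to-semantics map.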

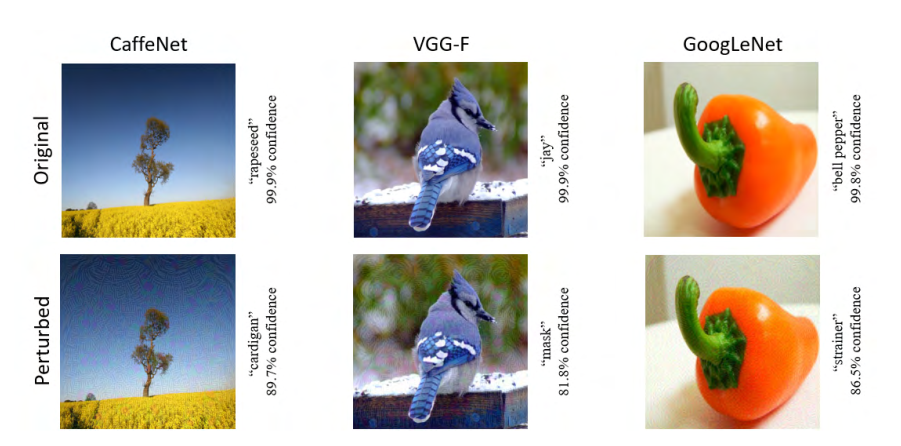

Deep neural networks have demonstrated phenomenal success in solving complex problems. However, recent studies show that they are vulnerable to adversarial attacks: subtle perturbations to the inputs that lead a model to predict incorrect outputs. For images, such perturbations are often too small to be perceptible, yet they can completely fool deep learning models. Adversarial attacks therefore pose a serious threat to the practical success of deep learning. Several real-world attacks have been demonstrated in the last few years, such as cell-phone camera attacks, road sign attacks, cyberspace attacks, and robotic vision attacks. In contrast, a considerable body of work has addressed defense strategies against these attacks, whose goal is to understand and/or learn the distribution of perturbations and to make classification systems robust to adversarial attacks. As a result, adversarial perturbations are attracting considerable research interest from both the attack and defense perspectives.
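The attack side can be made concrete with the Fast Gradient Sign Method (FGSM) of Goodfellow et al., one of the simplest adversarial attacks: it moves the input by eps in the direction of the sign of the loss gradient, so no pixel changes by more than eps. To keep the sketch self-contained, it attacks a toy logistic-regression classifier whose input gradient has a closed form; attacking a deep network follows the same recipe but obtains the gradient through automatic differentiation. The model, its random weights, and eps = 0.03 are arbitrary assumptions for illustration.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def bce_loss(x, y, w, b):
    """Binary cross-entropy of a logistic-regression model p = sigmoid(w.x + b)."""
    p = np.clip(sigmoid(x @ w + b), 1e-12, 1.0 - 1e-12)
    return float(-(y * np.log(p) + (1 - y) * np.log(1.0 - p)))


def fgsm(x, y, w, b, eps):
    """Fast Gradient Sign Method: one step of size eps along sign(dLoss/dx)."""
    p = sigmoid(x @ w + b)
    grad_x = (p - y) * w  # analytic gradient of the BCE loss w.r.t. the input x
    x_adv = x + eps * np.sign(grad_x)
    return np.clip(x_adv, 0.0, 1.0)  # keep pixels in the valid range [0, 1]


rng = np.random.default_rng(0)
w, b = rng.normal(size=64), 0.0             # toy "trained" linear classifier
x, y = rng.uniform(0.0, 1.0, size=64), 1.0  # a clean 8x8 "image", flattened
x_adv = fgsm(x, y, w, b, eps=0.03)
```

Every coordinate moves by at most eps, so the perturbation stays small in the L∞ sense, yet each step pushes the logit in the direction that increases the loss; defenses such as adversarial training reuse exactly this kind of perturbed input during training.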